The AI revolution has driven a surge in data center development, with over $450B invested globally in 2025 alone. In parallel, declining hardware costs (especially for GPUs and related microchips) and improved models have created significant opportunities for more computation at the edge, a strategy that is increasingly attractive as centralized data centers face siting bottlenecks and construction delays. Edge computing, where data is processed close to its source, could unlock value in energy, mobility, and industry where cloud-only strategies fall short, but several roadblocks must be overcome to realize this potential.

Key Takeaways

Low-latency requirements in specific use cases, combined with improving algorithms and compute capabilities at the edge, are unlocking new value in energy, mobility, and industry. Robotics & autonomous vehicles, industrial process control & optimization, and microgrids are a few promising areas where edge computing adds value.

The edge computing stack is maturing, but hardware and software roadblocks still exist. Device constraints, edge-to-cloud orchestration, and interoperability are pain points for customers and opportunities for innovation.

Designing effective edge-cloud hybrid architectures will be key to realizing value. The most successful deployments will leverage the respective strengths of edge and cloud models rather than treating them as substitutes.

If you’re an early-stage founder building software in this space, we’d love to hear from you! Contact us.

What is edge computing?

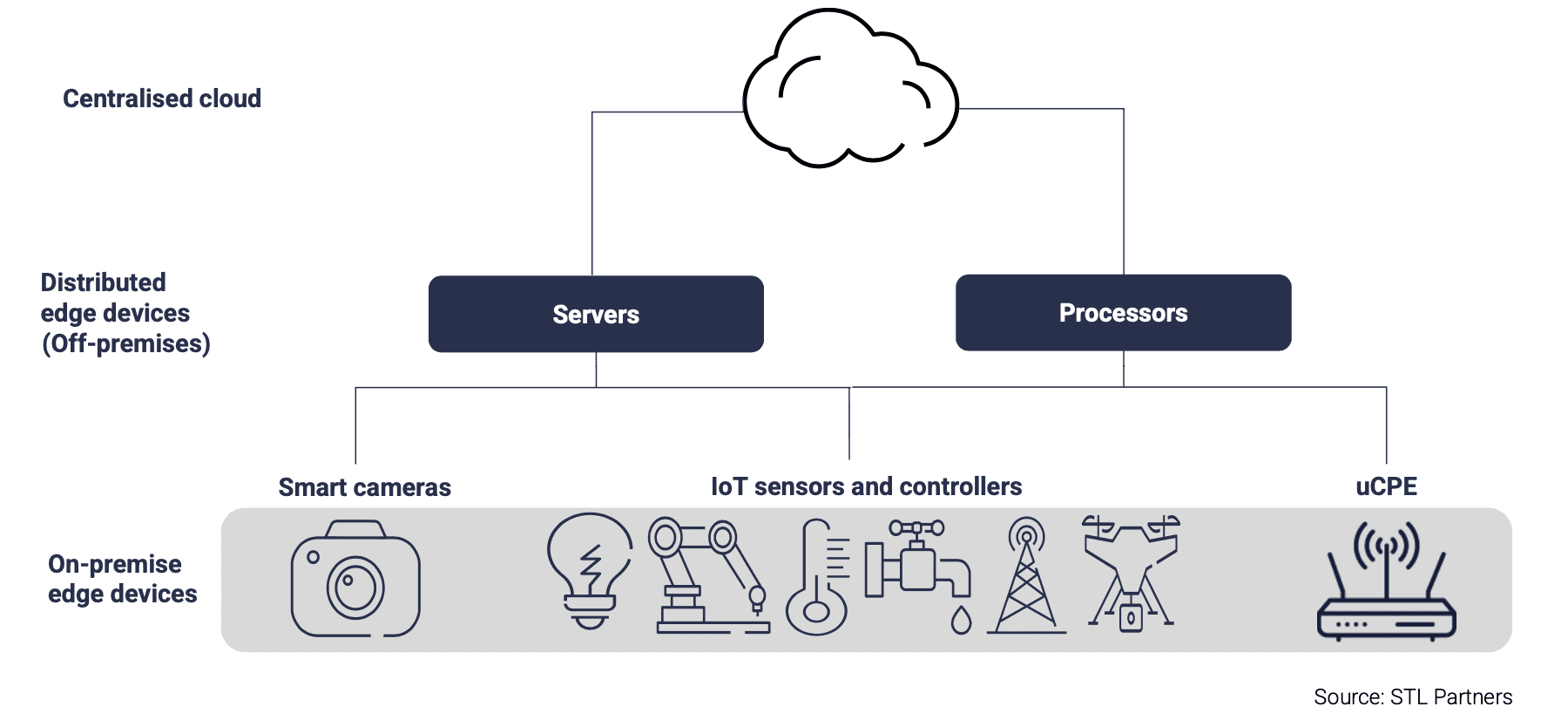

Edge computing refers to a model of computing where data is processed as close to the source as possible. Instead of processing data at a centralized data center, edge computing systems handle it locally, in near-real-time. The visualization below provides a simplified framework for the various elements of the edge computing stack.

Figure 1: Edge Computing Stack (Credit to STL Partners)

Key Definitions:

Centralized Cloud: data centers that host core services, databases, and applications for devices across the entire system

Distributed Edge Devices: edge data centers consisting of servers and processors deployed close to operational sites that process data locally rather than in a central cloud

On-Premise Edge Devices: devices that collect and process data at the edge, as well as the devices that connect to and control edge systems

Recently, advances in AI/ML algorithms (including GenAI), along with the falling cost and rising power of edge compute hardware, have unlocked additional use cases at the edge. In its 2026 Outlook for Edge AI, Dell highlights this trend, reporting that as of 2024, 73% of surveyed organizations were actively moving their AI inference workloads to the edge. Dell expects this trend to continue in 2026.

Despite excitement about the potential of edge computing, it’s important to emphasize that the cloud remains an incredible resource, with many performance and cost advantages that come with scale. Furthermore, past edge deployments have been hampered by a fragmented ecosystem of stakeholders, high complexity and cost, and limited ROI (largely because data collected at the edge was not processed effectively).

Given this dynamic, it’s essential for businesses to prioritize use cases where edge deployments can create value and complement cloud strategies. The framework in the following section presents our perspective on key factors to consider.

How edge computing can unlock value in our investment areas

Across our investment areas — energy, industry, and mobility — three key factors influence the value creation potential of edge computing:

Latency and Connectivity: Reducing, or even eliminating, network transit time (the time it takes for data to travel from one point to another, or to make a round trip) enables near-real-time decision-making and adjustments. Local systems are also insulated from bandwidth and connectivity issues, which can improve reliability. These factors are especially valuable for safety-critical processes.

Privacy and Security: Handling data locally eliminates transmission-related privacy concerns. However, this benefit must be carefully weighed against a larger attack surface: more devices that can be compromised.

Cost Tradeoffs: Edge computing systems don’t benefit from the same economies of scale as large data centers, but this disadvantage can be partially offset by lower data transmission costs.
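A back-of-the-envelope model can make the cost tradeoff concrete. The sketch below is a minimal Python comparison of one site's monthly costs, assuming that the edge node filters out a fixed fraction of raw data before upload and that cloud compute scales linearly with the data still sent upstream. The cost categories, the `SiteCosts` fields, and all example figures are hypothetical and not drawn from any benchmark.

```python
from dataclasses import dataclass


@dataclass
class SiteCosts:
    """Hypothetical monthly cost inputs for one site (illustrative numbers only)."""
    raw_data_gb: float           # data generated at the site per month
    edge_reduction: float        # fraction of raw data filtered out at the edge (0..1)
    transfer_cost_per_gb: float  # network/egress cost per GB sent to the cloud
    cloud_compute_cost: float    # monthly cloud processing cost if all raw data is sent
    edge_hw_cost: float          # up-front cost of the edge hardware
    edge_hw_life_months: int     # months over which the hardware is amortized
    edge_opex: float             # monthly power, maintenance, and management at the edge


def cloud_only_monthly(c: SiteCosts) -> float:
    """Send all raw data to the cloud and process it there."""
    return c.raw_data_gb * c.transfer_cost_per_gb + c.cloud_compute_cost


def hybrid_monthly(c: SiteCosts) -> float:
    """Process at the edge and upload only the data that survives local filtering."""
    kept = 1 - c.edge_reduction
    transfer = c.raw_data_gb * kept * c.transfer_cost_per_gb
    # Assumption: cloud compute scales linearly with the share of data still sent upstream.
    cloud_compute = c.cloud_compute_cost * kept
    # Edge hardware is a fixed cost spread evenly over its useful life.
    amortized_hw = c.edge_hw_cost / c.edge_hw_life_months
    return transfer + cloud_compute + amortized_hw + c.edge_opex


site = SiteCosts(
    raw_data_gb=2000,
    edge_reduction=0.9,
    transfer_cost_per_gb=0.09,
    cloud_compute_cost=150,
    edge_hw_cost=3600,
    edge_hw_life_months=36,
    edge_opex=40,
)
print(f"cloud-only: {cloud_only_monthly(site):.2f}/month")
print(f"hybrid:     {hybrid_monthly(site):.2f}/month")
```

With these made-up inputs the hybrid setup costs about 173 per month versus 330 for cloud-only, but the result is sensitive to the assumptions: if only 30% of the data is filtered at the edge (`edge_reduction=0.3`), the hybrid cost rises to about 371 and the cloud-only option wins.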

Energy

The near-zero latency unlocked by edge computing allows energy systems to make decisions in real time. This benefit is particularly salient for microgrids and energy management systems: processing generation and storage data locally removes operational dependence on external systems and lets local controllers dispatch energy, balance loads, and optimize charging schedules. On the centralized grid, edge devices can support smart grid management and demand response by rapidly balancing supply and demand and managing voltage fluctuations. Utilities can also deploy robots powered by edge compute for asset inspection, keeping frontline workers out of potentially dangerous jobs.

Mobility

In the mobility sector, autonomous vehicles require ultra-low latency. Edge computing provides the on-board processing power to analyze sensor inputs and act on them in real time, which an autonomous vehicle needs in order to react to its environment and operate safely.

Industry

Integrating edge computing can improve throughput for industrial processes while reducing costs. On manufacturing lines, edge deployments complement existing Manufacturing Execution Systems (MES) and Supervisory Control and Data Acquisition (SCADA) systems: they enable computer-vision-based quality assurance, allow robots to adjust quickly when quality issues arise, and keep critical processes running optimally during network interruptions. Beyond the manufacturing line, edge computing can help robots move products or equipment around plants, using computer vision to shorten reaction times and improve safety.

Roadblocks & unlocks for edge computing

Although edge computing has the potential to unlock new value for businesses, three key roadblocks can hinder adoption: 1) deploying AI & ML models on resource-constrained devices, 2) edge-to-cloud orchestration and device management, and 3) a fragmented edge ecosystem that limits interoperability.

Beyond these pain points, which we explore in more detail below, the following challenges also represent opportunities for innovation:

Managing cybersecurity across a dramatically increased attack surface at the edge

Developing mechanisms that enable fault-tolerant, automatic operation

Collecting and processing data for AI training

Pain Point #1: Deploying AI & ML models on resource-constrained devices

AI & ML models are becoming increasingly efficient, and compute resources are becoming more powerful, but the physical size of edge hardware remains an inherent constraint. Many small devices such as wearables, drones, and sensors lack the space required for full-size GPUs and batteries, limiting their ability to perform complex calculations even with today’s most optimized models and hardware. In a fleet of edge devices with varying hardware capabilities, many versions of the same model may be required.

We see opportunities for software to address this pain point through:

Model compression tools that maintain model accuracy at a reduced model size

Energy and power optimization capabilities to increase compute throughput on constrained devices
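Quantization is one widely used model compression technique (pruning and distillation are others). The sketch below shows symmetric per-tensor int8 post-training quantization in plain Python: each 32-bit float weight is stored as an 8-bit integer plus one shared scale factor, cutting weight storage by roughly 4x on large tensors at the cost of a bounded rounding error. It is a minimal illustration with made-up weights, not how any particular commercial toolchain works; production tools typically add per-channel scales, calibration data, and accuracy checks.

```python
def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Symmetric per-tensor int8 quantization: each weight w is stored as an
    integer q in [-127, 127] such that w is approximately q * scale."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    # Map the largest-magnitude weight to +/-127; guard against an all-zero tensor.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # round() goes to the nearest integer (ties to even); the clamp guards against float drift.
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q: list[int], scale: float) -> list[float]:
    """Recover approximate float weights, e.g. to check accuracy after compression."""
    return [v * scale for v in q]


weights = [0.82, -1.27, 0.05, 0.40, -0.33]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

fp32_bytes = 4 * len(weights)  # one 32-bit float per weight
int8_bytes = len(q) + 4        # one byte per weight plus a single fp32 scale
max_err = max(abs(w - r) for w, r in zip(weights, restored))
print(q, f"{fp32_bytes} -> {int8_bytes} bytes, max error {max_err:.4f}")
```

On this five-weight toy tensor the shared scale makes the savings look smaller than 4x; on a tensor with millions of weights the scale's overhead is negligible. The rounding error per weight is at most half of `scale`, which is why outlier weights (which inflate `scale`) hurt accuracy and why real tools often use finer-grained scales.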

Startup Spotlight:

LatentAI’s Efficient Inference Platform (LEIP) allows developers to compress AI models while maintaining accuracy and optimizing them for edge deployment.

Pain Point #2: Edge-to-cloud orchestration and device management

The scale and distribution of edge devices create complexity not seen in centralized computing systems. Allocating workloads between edge systems and the cloud, synchronizing data between systems (especially with intermittent connectivity), and managing a distributed fleet of thousands to millions of edge devices all create substantial challenges.

The following solution sets are well-suited to address these challenges:

Allocate workloads between edge devices and the cloud based on latency, cost, and compliance requirements

Automate conflict resolution for edge-cloud data synchronization

Provide zero-touch provisioning and lifecycle management to avoid costly trips to the field
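To illustrate workload placement, here is a minimal rule-based sketch in Python, assuming compliance and latency act as hard constraints and cost breaks ties. All class names, fields, workload names, and numbers are hypothetical; real orchestrators also weigh capacity, bandwidth, failover, and changing network conditions.

```python
from dataclasses import dataclass
from enum import Enum


class Site(Enum):
    EDGE = "edge"
    CLOUD = "cloud"


@dataclass
class Workload:
    name: str
    latency_budget_ms: float     # maximum acceptable response time
    data_must_stay_onsite: bool  # e.g. a data-residency or compliance rule
    edge_cost: float             # estimated cost to run at the edge (any consistent unit)
    cloud_cost: float            # estimated cost to run in the cloud, including data transfer


@dataclass
class Environment:
    cloud_rtt_ms: float          # network round trip from the site to the cloud region
    cloud_processing_ms: float
    edge_processing_ms: float
    edge_capacity_available: bool


def place(w: Workload, env: Environment) -> Site:
    """Compliance and latency are hard constraints; cost breaks ties."""
    edge_ok = env.edge_capacity_available and env.edge_processing_ms <= w.latency_budget_ms
    cloud_latency = env.cloud_rtt_ms + env.cloud_processing_ms
    # Data that must stay on-site rules out the cloud regardless of latency or cost.
    cloud_ok = not w.data_must_stay_onsite and cloud_latency <= w.latency_budget_ms
    if edge_ok and cloud_ok:
        return Site.EDGE if w.edge_cost <= w.cloud_cost else Site.CLOUD
    if edge_ok:
        return Site.EDGE
    if cloud_ok:
        return Site.CLOUD
    raise ValueError(f"no feasible placement for {w.name!r}")


env = Environment(cloud_rtt_ms=60, cloud_processing_ms=15,
                  edge_processing_ms=25, edge_capacity_available=True)
workloads = [
    Workload("defect-detection", 50, False, edge_cost=3.0, cloud_cost=1.0),
    Workload("weekly-report", 5000, False, edge_cost=2.0, cloud_cost=0.5),
    Workload("operator-camera-feed", 1000, True, edge_cost=4.0, cloud_cost=1.0),
]
for w in workloads:
    print(f"{w.name}: {place(w, env).value}")
```

In this example the defect-detection job lands at the edge because the 75 ms cloud path misses its 50 ms budget, the weekly report goes to the cheaper cloud, and the camera feed stays on-site for compliance even though the cloud would be cheaper.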

Startup Spotlight:

Barbara’s Industrial Edge Platform is specifically designed for energy, utilities, and manufacturing use cases, and allows businesses to store, visualize, and process data at the edge or in the cloud depending on their specific needs. Barbara also provides a centralized device management tool to remotely provision, configure, update, operate, and decommission edge devices across all sites.

Pain Point #3: Lack of interoperability

Today, the edge ecosystem is fragmented. Proprietary solutions create vendor lock-in because each device may have its own software, firmware, and communication protocols that do not easily interoperate with devices from other vendors. Legacy systems and modern edge stacks are difficult to integrate, and deployments often require maintaining many code bases. Open standards (ETSI MEC, Margo) and frameworks (Linux Foundation's Project EVE) for edge devices are emerging, but there is still an opportunity for multi-vendor middleware platforms to add value.

In addition to establishing open standards, edge computing would benefit from:

Standardized APIs for multi-vendor, multi-cloud edge environments

Compliance testing toolkits that ensure cross-vendor compatibility

Containerized applications that run on any edge infrastructure
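Standardized APIs typically work by defining one vendor-neutral interface and wrapping each vendor's SDK in an adapter, so application code never touches vendor-specific formats. The Python sketch below illustrates that adapter pattern under loudly stated assumptions: `TemperatureSensor`, both vendor device classes, and their data formats are hypothetical stand-ins, not real SDKs or any published edge standard.

```python
from typing import Protocol


class TemperatureSensor(Protocol):
    """Hypothetical vendor-neutral API that application code is written against."""

    def read_celsius(self) -> float: ...


class VendorADevice:
    """Hypothetical vendor SDK: reports tenths of a degree Fahrenheit in a dict."""

    def get_reading(self) -> dict:
        return {"temp_f_x10": 986}


class VendorBDevice:
    """Hypothetical vendor SDK: reports Kelvin as a string."""

    def poll(self) -> str:
        return "310.15"


class VendorAAdapter:
    """Translates Vendor A's format into the shared API."""

    def __init__(self, device: VendorADevice) -> None:
        self._device = device

    def read_celsius(self) -> float:
        fahrenheit = self._device.get_reading()["temp_f_x10"] / 10
        return (fahrenheit - 32) * 5 / 9


class VendorBAdapter:
    """Translates Vendor B's format into the shared API."""

    def __init__(self, device: VendorBDevice) -> None:
        self._device = device

    def read_celsius(self) -> float:
        return float(self._device.poll()) - 273.15


def fleet_average_celsius(sensors: list[TemperatureSensor]) -> float:
    """Application logic sees one API, regardless of which vendor built the device."""
    return sum(s.read_celsius() for s in sensors) / len(sensors)


fleet = [VendorAAdapter(VendorADevice()), VendorBAdapter(VendorBDevice())]
print(f"{fleet_average_celsius(fleet):.1f} °C")
```

Adding a third vendor means writing one new adapter; `fleet_average_celsius` and any other application code stay unchanged. A shared standard pushes this translation work to vendors so that each customer does not have to maintain its own adapters.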

Startup Spotlight:

Atym is bringing WebAssembly-based software containers to resource-constrained edge devices that cannot run Linux or Docker, which enables developers to build in familiar languages while still targeting a wide range of chips and operating systems for deployment.

Powerhouse Ventures’ Perspective: edge computing is primed to complement cloud strategies

With improving software models and falling hardware costs, edge computing will become a viable solution for a wide range of use cases within energy, mobility, and industry. Now is the time for leading organizations to identify their highest value applications, begin understanding their requirements for effective edge-cloud hybrid approaches, and position themselves to adopt and implement effective edge strategies.

If you’re an early-stage founder building software that enables edge computing in energy, mobility, or industry, or if you’ve been thinking about this space, we’d love to hear from you! Contact us.